The consistent availability of good data provides a high-quality springboard for the critical interpretation and geological modelling phases of the resource evaluation. Fifteen to 20 years ago the reliability of this data would often have been in question, adding a further layer of risk to an already uncertain modelling task. The skills of the geology modellers – geophysicists, geochemists and explorationists – remain at a high level. What has changed is that, perhaps for the first time, the geological and resource modelling tools available are able to do justice to the interpreted models. The number of compromises that imperfect technology forces on the desired interpretation is rapidly declining.

Geological risk

How is geological risk being assessed today, and how has its treatment changed in the past 10-15 years? Many of the principles of assessing geological modelling risk have not changed in this time. One of the tools available to the geologist to capture or qualify the potential risk in interpretation and modelling is the generation of alternative interpretations of the geological controls and mineralised zones. These can be created in a number of ways: by providing the geological framework and data to a geologist other than the one who generated the first interpretation, by removing a subset of the data (either at random or systematically) and seeing how much the model changes, or by testing alternative (but still plausible) genetic hypotheses.
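The data-removal test described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the sample data are synthetic, the function names are hypothetical, and a simple inverse-distance interpolator stands in for whatever modelling method is actually in use.

```python
import math
import random

def idw(samples, x, y, power=2.0):
    """Inverse-distance-weighted estimate at (x, y) from (sx, sy, value) samples."""
    num = den = 0.0
    for sx, sy, v in samples:
        d = math.hypot(x - sx, y - sy)
        if d < 1e-12:
            return v  # exactly on a sample: honour it
        w = 1.0 / d ** power
        num += w * v
        den += w
    return num / den

def classify(samples, nodes, cutoff=0.5):
    """Flag each grid node as inside (True) or outside (False) the modelled zone."""
    return [idw(samples, x, y) >= cutoff for x, y in nodes]

def removal_sensitivity(samples, nodes, drop_fraction=0.25, trials=20, seed=0):
    """Mean fraction of nodes whose classification flips when a random
    subset of the samples is withheld and the model is rebuilt."""
    rng = random.Random(seed)
    base = classify(samples, nodes)
    keep = max(1, round(len(samples) * (1.0 - drop_fraction)))
    changed = []
    for _ in range(trials):
        subset = rng.sample(samples, keep)
        alt = classify(subset, nodes)
        changed.append(sum(a != b for a, b in zip(base, alt)) / len(nodes))
    return sum(changed) / trials

# Synthetic data (an assumption for illustration): the indicator is 1 inside
# a circular zone of radius 3 centred on (5, 5), and 0 elsewhere.
rng = random.Random(42)
samples = []
for _ in range(40):
    x, y = rng.uniform(0, 10), rng.uniform(0, 10)
    samples.append((x, y, 1.0 if math.hypot(x - 5, y - 5) < 3 else 0.0))
nodes = [(i + 0.5, j + 0.5) for i in range(10) for j in range(10)]
score = removal_sensitivity(samples, nodes)
```

A score near zero suggests the interpretation is well supported by the data; a high score flags a model that leans heavily on a few samples.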

Another approach to considering geological modelling risk is to expose the project to a peer review with the specific objective of challenging the assumptions. This provides a qualitative – but still valuable – view on the extent to which things ‘could be wrong’. A risk minimisation tool, somewhat different to risk quantification, is to seek a geological analogue for the mineralisation under evaluation, either a local analogue or a globally-recognised deposit type. All of these techniques for looking at geological modelling risk have been practised for many years, and all are still valid and useful.

Digital tools

What has changed in the past decade, and particularly in the past five years, is the advent of digital tools to quickly and accurately generate and evaluate alternative geological models. Undoubtedly, one of the leaders in this field has been ARANZ Geo, which originated and grew around the development and marketing of the Leapfrog suite of mining and energy products. Originally developed for the medical imaging and movie special effects markets, and based upon an algorithm to quickly interpolate scattered 3-D data, Leapfrog has developed rapidly in both popularity and sophistication.

While the Leapfrog technology has moved from other fields into minerals, there has also been cross-fertilisation from the oil industry, where (until recently anyway) money for R&D has always been less of a problem than in the relatively impoverished mining sector. One product from the oil sector which is having a significant impact in minerals evaluation is GoCad from Paradigm Software. Originally developed to deal with sparse petroleum and gas data, together with dense geophysical data, GoCad has been extended to provide a range of geological modelling tools to work with the denser but more diverse data sets available in the minerals industry.

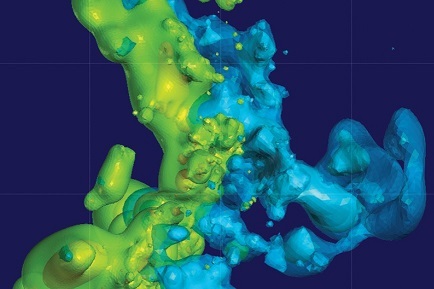

Alteration domains modelled with implicit method

Two of the key features of these products are implicit modelling and data integration. Implicit modelling is the generation of solids or surfaces which honour one or more features of a data set with considerably reduced manual intervention – but, critically, still with a degree of geologically-motivated intercession. For example, the typical approach to modelling a grade zone related to propylitic alteration in a porphyry copper deposit would be to slice the deposit on a series of section or plan views. Then, perhaps painstakingly, it would be necessary to interpret the combination of the alteration and copper assays as a series of two (or two-and-a-half) dimensional outlines which would then be joined together in three dimensions as a solid.

Implicit modelling, using a variety of interpolation algorithms, will generate the required 3-D solid with much less input from the user. Naturally the key to a successful outcome is to specify the interpolation parameters such that the implicit model replicates what would be achieved, albeit in a much greater timeframe, with a manual 2-D approach. The more easily one is able to incorporate and interpret the required geometrical constraints, the greater the productivity gain.
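To make the idea concrete, the following is a minimal sketch of an implicit model, not the algorithm used by any commercial package. It assumes samples coded +1 inside the alteration zone and -1 outside, fits a linear radial basis function through them, and treats the zero level of the fitted function as the boundary of the solid. The data, the function names and the `ranges` anisotropy parameter are all hypothetical.

```python
import math

def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x

def scaled_distance(p, q, ranges):
    """Distance after dividing each axis by its range (simple anisotropy)."""
    return math.sqrt(sum(((a - b) / r) ** 2 for a, b, r in zip(p, q, ranges)))

def fit_implicit(points, values, ranges):
    """Fit f(x) = sum_i w_i * d(x, x_i) so that f(x_i) = values[i]."""
    a = [[scaled_distance(p, q, ranges) for q in points] for p in points]
    weights = solve(a, values)

    def f(x):
        return sum(w * scaled_distance(x, p, ranges)
                   for w, p in zip(weights, points))
    return f

# Hypothetical samples: +1 = inside the alteration zone, -1 = outside.
points = [(0, 0, 0), (2, 0, 0), (-2, 0, 0), (0, 2, 0), (0, -2, 0),
          (0, 0, 2), (0, 0, -2), (4, 0, 0), (-4, 0, 0)]
values = [1, 1, 1, -1, -1, -1, -1, -1, -1]
ranges = (2.0, 1.0, 1.0)  # assumed elongation of the zone along x

f = fit_implicit(points, values, ranges)
inside = f((1.0, 0.0, 0.0)) >= 0.0  # is this location inside the solid?
```

The `ranges` tuple plays the role of the interpolation parameters discussed above: changing it stretches or flattens the resulting solid without any manual digitising, which is where geological judgement re-enters the process.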

The other advantage of modern geological modelling software is its ability to integrate multiple sets of data, both vector (data with quantity and a spatial attribute) and raster (2-D data), and to allow this data to contribute, directly or indirectly, to the solid geological or mineralisation model. Thus a 3-D seismic survey may be converted directly into a set of faults in the same coordinate space as the sample data set.

A measure of the importance of this suite of products is the amount of money being spent on R&D, not only by the companies themselves but by the majors through sponsorship and ‘guided development’. The amount being spent is large for the mining industry and is particularly notable in such distressed times. Clearly it is perceived by many that there is a significant commercial and technical advantage to be gained through the combination of advanced geological modelling tools and expert geological knowledge.

Reducing geological risk

So how do these rapidly changing old and new tools help to depict and ultimately reduce geological risk? The key is the productivity gain in generating a single model or realisation of the geology or mineralisation. The application of rapid modelling tools allows for multiple equiprobable scenarios to be generated and modelled quickly in 3-D. Taken together, this set of possible geological/mineralisation models goes some way towards bracketing what geostatisticians call the space of uncertainty, that is, the set of all possible geology models available from a given set of data and assumptions.

Naturally, each of these models needs to be conditioned to the sample data, as well as the other available data sets – lithological, geophysical, geochemical, weathering, geotechnical, and so on. The exception to this ‘hard’ conditioning is when one or more of the data sets could be considered to be fuzzy or imprecise; for instance, with multiple phases of rock codes over a deposit, there may be considerable uncertainty surrounding a particular code or subset of codes. Nonetheless, this somewhat uncertain data is still of use as long as the fuzziness can be captured and modelled – it all contributes to the space of uncertainty, and geology is, at times, a very uncertain science.
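One simple way to summarise a set of alternative models is to count, block by block, how often each block falls inside the mineralised zone. The sketch below assumes the scenarios are equiprobable and have already been flagged onto a common block model; the four scenarios and six blocks are invented purely for illustration.

```python
import math

def block_probability(scenarios):
    """Fraction of scenarios in which each block is flagged as mineralised."""
    n = len(scenarios)
    return [sum(column) / n for column in zip(*scenarios)]

def block_entropy(p):
    """Binary entropy in bits: 0 when all scenarios agree, 1 when split 50/50."""
    if p in (0.0, 1.0):
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))

# Hypothetical example: four alternative interpretations of the same six
# blocks (1 = mineralised, 0 = waste).
scenarios = [
    [1, 1, 1, 0, 0, 0],
    [1, 1, 0, 0, 0, 0],
    [1, 1, 1, 1, 0, 0],
    [1, 0, 1, 0, 0, 0],
]
prob = block_probability(scenarios)
entropy = [block_entropy(p) for p in prob]
volumes = [sum(s) for s in scenarios]  # mineralised block count per scenario
```

Blocks with a probability near 0 or 1 are robust across interpretations, while blocks with high entropy mark where the interpretations disagree and where further drilling or mapping is likely to be most informative. The range of `volumes` gives a first bracket on tonnage.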

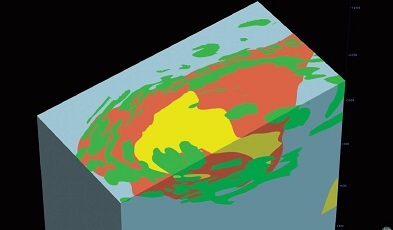

Geology modelling

Another area of rapid development in geological modelling is the relative ease with which solid geological (lithological) models can be constructed, not only models which honour the rock code data but which respect the timing and precedence relationships between lithological units and faults, intrusives, weathering fronts and other features. To construct such a model requires a ‘clean’ data set; in other words, little ambiguity in the meaning and representation of the various rock codes. This cleaning may require quite a data conversion effort, especially in older deposits. The rewards, however, in terms of vectors to mineralisation and communication of observed and interpreted features, should far outstrip the efforts in construction. The ability to break open and slice such ‘whole earth’ models is not to be underestimated.

Improvements in grade estimation

3D solid geological model 'honouring' geological timing relationships

It is worth comparing and contrasting these modelling developments with the improvements in grade estimation over the past 20 years. In the 1990s, putting the appropriate gold (or copper, or sulphur, or nickel) grade in the block and assessing the risk or uncertainty in that grade were not easy tasks. While the algorithms to generate optimal grades in blocks from the surrounding samples (i.e. the kriging equations) have been known and documented since the 1960s, successfully implementing these in a useable form which would run in a practical time (hours, not days) on the hardware of the day was not always possible. Assessing grade risk through conditional simulation has likewise been theoretically possible since 1970, but it was not really until the early 2000s that both computing power and the incorporation of simulation routines into readily available and user-friendly mining software made it achievable in common practice.
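For readers unfamiliar with them, the kriging equations can be stated compactly. The block below gives the ordinary kriging system in its usual textbook form for a point estimate; the notation (weights λ, Lagrange multiplier μ, variogram γ) is the conventional one and is not taken from this article.

```latex
% Ordinary kriging estimate at location x_0 from samples Z(x_1), ..., Z(x_n)
Z^{*}(x_0) = \sum_{i=1}^{n} \lambda_i \, Z(x_i)

% The weights \lambda_i and the Lagrange multiplier \mu solve:
\sum_{j=1}^{n} \lambda_j \, \gamma(x_i - x_j) + \mu = \gamma(x_i - x_0),
\qquad i = 1, \dots, n

\sum_{j=1}^{n} \lambda_j = 1

% Kriging (estimation) variance
\sigma_{OK}^{2} = \sum_{i=1}^{n} \lambda_i \, \gamma(x_i - x_0) + \mu
```

For block estimates, the sample-to-point variogram terms on the right-hand side are replaced by their averages over the block, and the variance is reduced by the average variogram within the block. The system is one linear solve per block, which helps explain why run times on large models were a practical obstacle on older hardware.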

Today, in the second decade of the twenty-first century, it is not an exaggeration to state that accurate assessment of both average grades at the appropriate volume support and the uncertainty in those grades is routine and commonplace. Grade risk is certainly not as widely measured as average grade, but most medium- to large-sized mining organisations, and most service organisations, are geared up to assess it.

Indeed, an assessment of this grade risk, while potentially qualitative, has recently been enshrined in the criteria for classification via Table 1 of the 2012 JORC Code. In other words, and at the risk of over-simplification, the measurement of grade risk is no longer an issue in resource evaluation. The challenge lies in capturing geological modelling risk.

The remainder of this decade may well be seen in retrospect as a golden era in the development of readily available, efficient, geologically accurate and relatively cheap modelling tools. The platform has well and truly been constructed with the development of some of these tools in such products as GoCad and Leapfrog, and the generalised mining package vendors are rushing to introduce implicit modelling and data integration tools in order to maintain relevance. There is clearly some way to go in this particular area of innovation, and future improvements will focus on the rapid generation of geological models which integrate and honour logged and interpreted lithologies, structures at the meso and macro scales, alteration and weathering zones, geometallurgical domains, 3-D and 4-D seismic interpretations, as well as assay and sample data.

Grade estimation

Grade estimation will be an integral part of the geological model rather than an afterthought that sits uneasily within the interpreted shapes, and the grade modelling will follow the contours of whichever feature or combination of feature layers best controls the grade distribution. The connection between geology and geostatistics, often so tenuous in the past, is growing stronger. In addition to the multiple realisations of potential grade currently available to the resource evaluation practitioner, potential geological modelling scenarios can now be integrated. The prize on offer is the front end of an integrated project risk assessment, reflecting the combination of geological and grade uncertainty. In many cases this will represent the majority of project risk; in other instances production risk, processing risk, technological risk, environmental risk and perhaps even climatic or social risk may dominate the technical risks of a project. All of these potential risks ultimately need to be captured and managed. One considerable challenge will be to correctly represent, and perhaps sample, the potentially vast space of uncertainty represented by these various aspects of risk. However, not to attempt this, given the tools which are becoming available, is either to misunderstand the key drivers of total project risk or to understate the levels of uncertainty involved, leading to potentially poor and costly decisions.
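A minimal Monte Carlo sketch shows how geological and grade uncertainty might be combined at this front end. Everything numerical here is invented for illustration: three geological scenarios with assumed tonnages and probabilities, and a copper grade drawn from a normal distribution that is assumed, simplistically, to be independent of the scenario.

```python
import random
import statistics

def simulate_metal(scenarios, grade_mean, grade_sd, draws=5000, seed=1):
    """Sample contained metal by pairing a randomly chosen geological
    scenario (tonnage, probability) with a randomly drawn grade."""
    rng = random.Random(seed)
    tonnages = [t for t, _ in scenarios]
    weights = [w for _, w in scenarios]
    metal = []
    for _ in range(draws):
        tonnes = rng.choices(tonnages, weights=weights, k=1)[0]
        grade = rng.gauss(grade_mean, grade_sd)  # grade in % Cu
        metal.append(tonnes * grade / 100.0)     # tonnes of contained metal
    return metal

def percentiles_10_50_90(values):
    """10th, 50th and 90th percentiles of the simulated outcomes."""
    cuts = statistics.quantiles(values, n=10)  # nine decile cut points
    return cuts[0], cuts[4], cuts[8]

# Hypothetical geological scenarios: (tonnage, probability)
scenarios = [(40e6, 0.25), (50e6, 0.50), (65e6, 0.25)]

combined = simulate_metal(scenarios, grade_mean=0.60, grade_sd=0.05)
grade_only = simulate_metal([(50e6, 1.0)], grade_mean=0.60, grade_sd=0.05)

spread_combined = statistics.stdev(combined)
spread_grade_only = statistics.stdev(grade_only)
low, mid, high = percentiles_10_50_90(combined)
```

Comparing `spread_combined` with `spread_grade_only` shows how much of the total uncertainty is hidden when the geological model is treated as fixed. In a real study the grade realisations would come from conditional simulation within each geological scenario rather than from an assumed distribution.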

Conclusion

As a final note of caution, while the enhanced geological modelling tools available will make the appreciation and estimation of risk easier, they do not provide an excuse for failing to apply the highest standards of geological skill and knowledge gained through education, training, experience and observation. There is not, and never will be, a magic bullet in this regard.

Ian Glacken FAusIMM(CP) is a director of Australia-based global consulting firm, Optiro. This article was originally published in the AusIMM Bulletin. www.ausimmbulletin.com